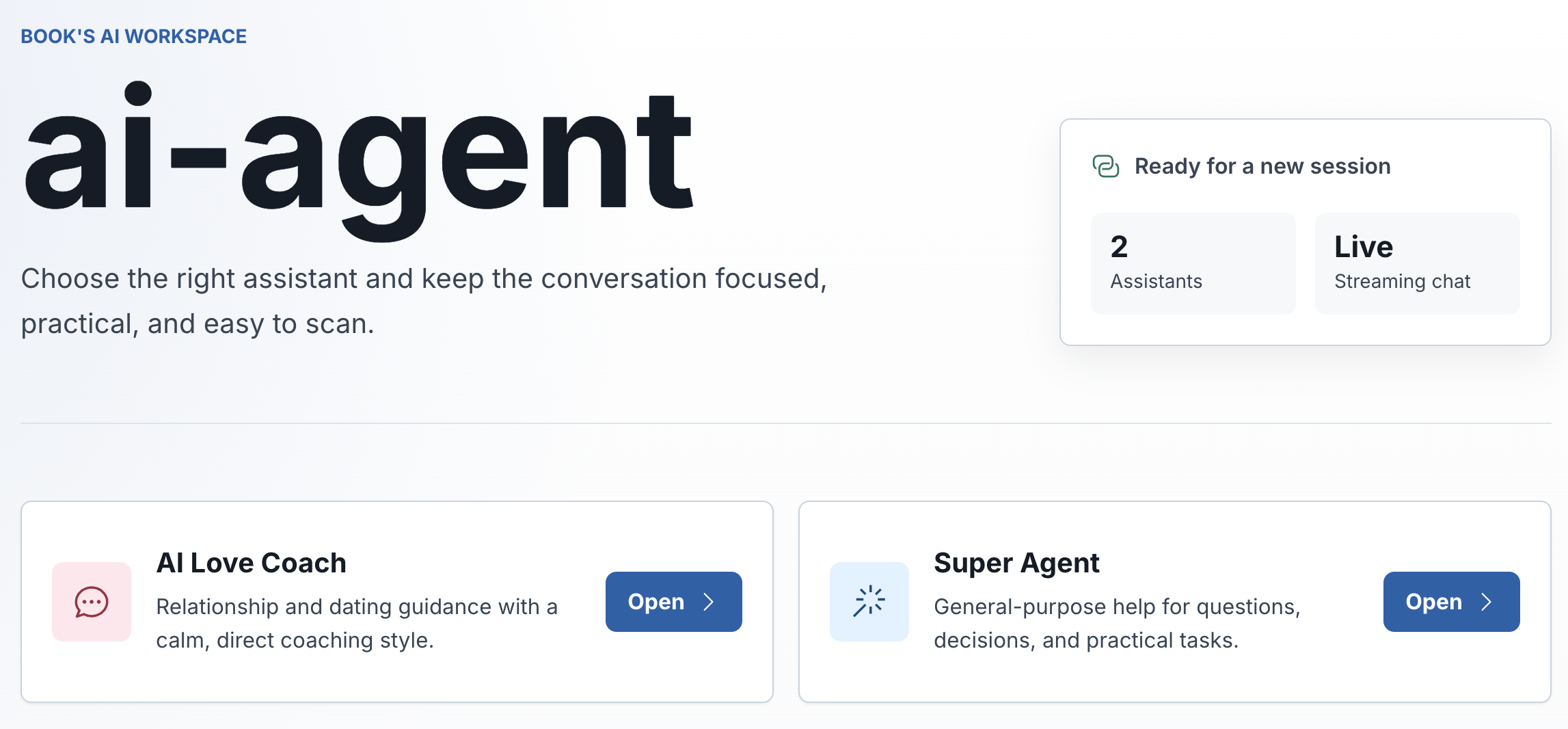

AI Agent Project

I recently finished a course on AI application development and wanted to write up what I actually learned. Not a review of the course — more just the concepts that clicked, the parts that were harder than expected, and what I ended up building.

About the project

What the project was

The course had me build two things. First, a conversational AI application called AI Love Master — essentially a chatbot backed by a custom knowledge base. The interesting part wasn't the chatbot itself but how it works: instead of the model answering purely from its training, it searches a vector database of documents first and passes the relevant chunks as context. That's called RAG (retrieval-augmented generation), and understanding it properly took me a while.

The second project was OpenManus, an autonomous agent. You give it a goal and it figures out the steps on its own — searching the web, downloading resources, generating a PDF, all without you telling it what to do at each step. It's based on a pattern called ReAct (reasoning + acting), where the agent alternates between thinking about what to do next and actually doing it.

Both are built in Java with Spring AI, which I hadn't used before this project.

The stuff that actually made sense once I built it

RAG is simpler than it sounds. The basic idea: take your documents, split them into chunks, convert each chunk to a vector (a list of numbers that captures the meaning), and store those vectors. When a user asks something, convert their question to a vector too, find the closest matching chunks, and include them in the prompt. The model then answers based on that context rather than just its training data.
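
To make the flow concrete, here is a minimal sketch in plain Java. It is not the Spring AI code from the project. The `TinyRag` class and its methods are hypothetical names, and `embed` is a toy word-hashing function standing in for a real embedding model, so the similarity scores only reflect shared words, not meaning.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

final class TinyRag {
    static final int DIMS = 64;

    // A chunk paired with its similarity to the question.
    record Scored(String chunk, double score) {}

    // Toy stand-in for an embedding model (assumption): each word is hashed
    // into one of DIMS buckets, so texts that share words get similar vectors.
    static double[] embed(String text) {
        double[] v = new double[DIMS];
        for (String word : text.toLowerCase().split("\\W+")) {
            if (!word.isEmpty()) {
                v[Math.floorMod(word.hashCode(), DIMS)] += 1.0;
            }
        }
        return v;
    }

    // Cosine similarity between two vectors of equal length.
    static double cosine(double[] a, double[] b) {
        double dot = 0, na = 0, nb = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            na += a[i] * a[i];
            nb += b[i] * b[i];
        }
        return (na == 0 || nb == 0) ? 0 : dot / Math.sqrt(na * nb);
    }

    // Returns the k chunks whose vectors are closest to the question's vector.
    static List<String> retrieve(List<String> chunks, String question, int k) {
        double[] q = embed(question);
        List<Scored> scored = new ArrayList<>();
        for (String chunk : chunks) {
            scored.add(new Scored(chunk, cosine(embed(chunk), q)));
        }
        scored.sort(Comparator.comparingDouble(Scored::score).reversed());
        List<String> top = new ArrayList<>();
        for (Scored s : scored.subList(0, Math.min(k, scored.size()))) {
            top.add(s.chunk());
        }
        return top;
    }

    // Puts the retrieved chunks in front of the question.
    static String buildPrompt(List<String> context, String question) {
        return "Answer using only this context:\n"
                + String.join("\n---\n", context)
                + "\n\nQuestion: " + question;
    }
}
```

A real setup would compute chunk vectors once at ingestion time and let the vector database do the nearest-neighbour search, rather than re-embedding every chunk per question as this sketch does.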

In practice, getting the chunking strategy right matters more than I expected. Too large and you're passing irrelevant context to the model. Too small and you lose the surrounding information that makes a chunk useful.
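
The size and overlap trade-off is easier to see in code. This is a sketch only: the `Chunker` class is a hypothetical name, and it splits on raw character counts, whereas real splitters usually work on tokens or sentence boundaries.

```java
import java.util.ArrayList;
import java.util.List;

final class Chunker {
    // Splits text into windows of `size` characters. Each window repeats the
    // last `overlap` characters of the previous one, so context that straddles
    // a boundary appears in both chunks.
    static List<String> chunk(String text, int size, int overlap) {
        if (size <= 0 || overlap < 0 || overlap >= size) {
            throw new IllegalArgumentException("need size > 0 and 0 <= overlap < size");
        }
        List<String> chunks = new ArrayList<>();
        int step = size - overlap;
        for (int start = 0; start < text.length(); start += step) {
            int end = Math.min(start + size, text.length());
            chunks.add(text.substring(start, end));
            if (end == text.length()) {
                break; // last window reached the end of the text
            }
        }
        return chunks;
    }
}
```

For example, `Chunker.chunk("abcdefghij", 4, 2)` returns `[abcd, cdef, efgh, ghij]`. Raising `size` gives fewer, broader chunks; raising `overlap` gives more chunks with more shared context.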

Tool calling is cleaner than I imagined. The model doesn't actually call your tools directly. It returns a structured response saying "I want to call function X with these parameters." Your application intercepts that, runs the actual function, and feeds the result back. The model never touches your filesystem or APIs — it just requests things and gets results.
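
The application side of that exchange can be sketched in a few lines of Java. This is an illustration under assumptions, not Spring AI's actual tool API: `ToolDispatcher` and `ToolCall` are hypothetical names, and the structured request is modelled as a tool name plus a map of string arguments.

```java
import java.util.Map;
import java.util.function.Function;

final class ToolDispatcher {
    // Hypothetical shape of the structured request the model returns:
    // "I want to call function X with these parameters."
    record ToolCall(String name, Map<String, String> args) {}

    private final Map<String, Function<Map<String, String>, String>> tools;

    ToolDispatcher(Map<String, Function<Map<String, String>, String>> tools) {
        this.tools = tools;
    }

    // Runs the requested tool inside the application. The returned string is
    // what would be fed back to the model as the tool result. Failures are
    // returned as text so the model can react to them.
    String execute(ToolCall call) {
        Function<Map<String, String>, String> tool = tools.get(call.name());
        if (tool == null) {
            return "ERROR: unknown tool '" + call.name() + "'";
        }
        try {
            return tool.apply(call.args());
        } catch (RuntimeException e) {
            return "ERROR: " + e.getMessage();
        }
    }
}
```

Because only registered functions can run, the registry doubles as an allow-list: the model can request anything, but the application decides what actually executes.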

The ReAct pattern is elegant. The agent runs in a loop: reason about what to do, pick a tool, call it, observe the result, reason about what to do next. It keeps going until it decides it's done. The tricky part is handling failures gracefully: when a tool fails, the agent needs to adapt rather than keep retrying the same failing call.
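
A stripped-down version of that loop might look like the following. It is a sketch, not OpenManus's implementation: `ReactLoop`, `Step`, and `Reasoner` are hypothetical names, the `Reasoner` interface stands in for the LLM call, and the `maxSteps` cap is one simple way to stop an agent that never decides it is done.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.function.Function;

final class ReactLoop {
    // One decision from the reasoning step: call a tool, or finish.
    record Step(String tool, String input, String finalAnswer) {
        static Step call(String tool, String input) { return new Step(tool, input, null); }
        static Step finish(String answer) { return new Step(null, null, answer); }
        boolean isFinal() { return finalAnswer != null; }
    }

    // Stand-in for the LLM (assumption): reads the goal and the history of
    // earlier actions and observations, then picks the next step.
    interface Reasoner {
        Step next(String goal, List<String> history);
    }

    static String run(String goal, Reasoner reasoner,
                      Map<String, Function<String, String>> tools, int maxSteps) {
        List<String> history = new ArrayList<>();
        for (int i = 0; i < maxSteps; i++) {
            Step step = reasoner.next(goal, history);      // reason
            if (step.isFinal()) {
                return step.finalAnswer();
            }
            String observation;
            try {
                Function<String, String> tool = tools.get(step.tool());
                observation = (tool == null)
                        ? "ERROR: unknown tool " + step.tool()
                        : tool.apply(step.input());        // act
            } catch (RuntimeException e) {
                // A failure becomes an observation the reasoner can adapt to.
                observation = "ERROR: " + e.getMessage();
            }
            history.add(step.tool() + "(" + step.input() + ") -> " + observation); // observe
        }
        return "Stopped after " + maxSteps + " steps without finishing";
    }
}
```

Recording tool failures in the history, instead of throwing, is what gives the reasoning step a chance to try something else on the next pass.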

What was harder than expected

RAG tuning. Getting basic RAG working is straightforward. Getting it to work well (good recall, relevant results, not returning garbage) involves a lot of decisions around chunk size, overlap, metadata filtering, and retrieval strategy. Hybrid search (combining semantic similarity with keyword matching) tends to work better for technical content than pure vector search, because exact terms such as class names and error codes matter there.
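
One simple way to combine the two signals is a weighted sum, sketched below. The `HybridScore` class and the `alpha` weight are assumptions for illustration. Production systems often use BM25 for the keyword side and rank-fusion methods to merge results, which this sketch does not attempt.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Set;

final class HybridScore {
    // Lower-cased set of word tokens in the text.
    static Set<String> terms(String text) {
        Set<String> out = new HashSet<>(Arrays.asList(text.toLowerCase().split("\\W+")));
        out.remove("");
        return out;
    }

    // Fraction of query terms that appear verbatim in the chunk (0.0 to 1.0).
    static double keywordScore(String query, String chunk) {
        Set<String> q = terms(query);
        if (q.isEmpty()) {
            return 0.0;
        }
        Set<String> c = terms(chunk);
        long hits = q.stream().filter(c::contains).count();
        return (double) hits / q.size();
    }

    // Weighted blend of the two scores: alpha = 1.0 is pure vector search,
    // alpha = 0.0 is pure keyword matching.
    static double hybrid(double semanticScore, double keywordScore, double alpha) {
        return alpha * semanticScore + (1 - alpha) * keywordScore;
    }
}
```

The `semanticScore` argument would come from the vector search (for example a cosine similarity), so a chunk that mentions the exact identifier from the query gets a boost even when its embedding is only a moderate match.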

Prompt reliability. Early versions of the chatbot would produce inconsistent outputs depending on minor wording changes in the input. Systematic prompt testing — trying variations, checking edge cases — is not glamorous but it's necessary.

What I'd do differently

The knowledge base content I used was pretty thin. A richer, better-organized set of documents would have made the RAG system much more interesting to test. I also didn't spend enough time on the agent's error recovery — the happy path works fine but edge cases expose a lot of rough spots.

Stack

Java 21, Spring Boot 3, Spring AI, LangChain4j, PostgreSQL + PGvector, Ollama

Key outcomes

Bounded tools and explicit workflow design.

Focused on useful orchestration rather than hype.